1. TV Station’s Viewers Irate over Choice of Music and Sound Effects Scott Schaffer, of ABC’s WNEP affiliate in PA, launches into the topic of sound effects during the segment “Talkback 16,” in which the station responds to viewers’ feedback. In this particular segment, the issues defined as “critical topics” some WNEP callers relate to […]

Year: 2018

Temp Tracks: A Movie’s Secret Score

Selecting ‘temp music’ tracks is an essential part of the overall scoring process in filmmaking. Yet its importance is often overlooked. In this article, I explain exactly what temp music is and the role it plays in everything from a low-budget short film to a major Hollywood feature. A Temporary Definition First of […]

Sound Effects and the Fake Engine Roar

Over the years, the auto industry has steadily honed its craft at building environmentally sound cars and reducing unwanted noise for drivers. As a result, the authentic, organic engine sound is masked more and more. For car aficionados who may buy vehicles specifically for the engine roar, this is not necessarily a good […]

Sound Design Founders of the Theatre

1. The Theatre’s Contribution to Sound Design As with any human discipline or industry, sound design as a practice and art form developed collectively over time, spurred on by the contributions of many and the striking visions and passion of leaders in the field. Below are two major contributors to the world of sound design […]

Order #222222 placed at Shockwave-Sound.com

It’s always a bit of fun when you reach little milestones with your business, and we are happy to announce that a couple of days ago, order # 222222 was placed here at Shockwave-Sound.com. That’s since we started our current order database in April of 2005. (We had ‘manual’ order counting from 2000 till 2005). […]

Gangster Flick, Vol. 1

Shockwave-Sound.com proudly presents the brand new collection of stock music: “GANGSTER FLICK, VOL. 1”: An amazing collection of royalty-free music available for immediate licensing, download, and legal use in your own productions such as (but not limited to) Trailers, Videos, Games, Podcasts, Audio dramas, TV, Film, Apps, Installations and more. Tracks composed by Felipe Vassão, […]

Hans Jenny and The Sound Matrix

Cymatics and the Sound Matrix Humans have long considered sound and music as mystical and magical, whether worshipped by the ancients and embedded in politics and culture in ancient China, regarded as a portal to the infinite by Buddhists chanting OM, or modern day musicians and sound designers revelling in and revering their own sound […]

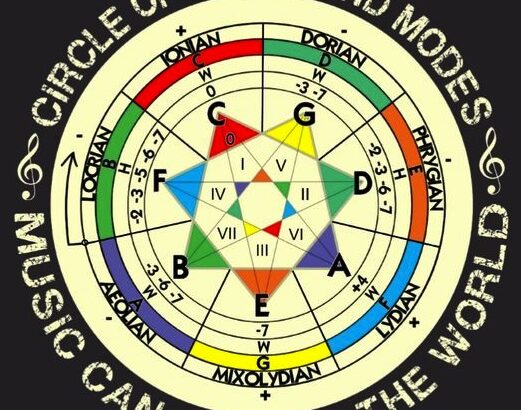

Music of the Minor Modes

The prior post concerned the three major modes which are designated by their major 5ths. The minor modes are similarly designated by their minor 5ths. Each has a unique history and flavor, but they share the familiar minor darkness of emotion. From the bittersweetness of the Dorian, the tense power of the Phrygian, […]

Unintentionally best-kept secrets at Shockwave-Sound

Hi all, we would like to bring some attention to a couple of features / offers that we have here at Shockwave-Sound, but that are perhaps not being made visible enough: Bulk offers on downloadable CD-collections Our pre-packaged, ready-made, downloadable album collections of stock music and royalty free music (and also sound-effects) are typically […]

The Secret Power of Music: The Modern – Part II

Welcome to Part II of this blog discussion on David Tame’s The Secret Power of Music. Part I explained Tame’s main point in the initial part of his book. Namely, that music among the ancients, in philosophies that can be traced up to the present time, was considered an essential part of the source of […]