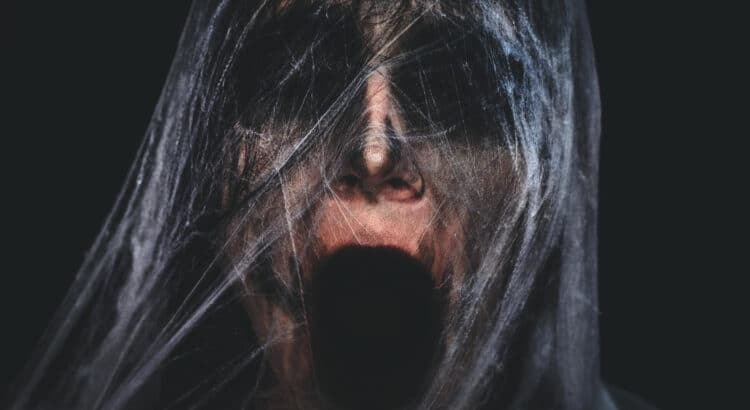

From Lynne Sound FX we have a new package of 26 truly spine-chilling Sci-Fi/Horror Background Sounds posted on the site today. These freshly recorded, grisly sound effects were made with a combination of human voices, synthesizers, and various effects and techniques to create 26 evil, malevolent, foreboding sounds. These are not short […]

Tag: Sound design

Sound Oddities, part 1

1. TV Station’s Viewers Irate over Choice of Music and Sound Effects Scott Schaffer, of ABC affiliate WNEP in PA, launches into the topic of sound effects during the segment “Talkback 16,” in which the station responds to viewers’ feedback. In this particular segment, the issues defined as “critical topics” some WNEP callers relate to […]

Sound Effects and the Fake Engine Roar

Over the years, the auto industry has increasingly honed its craft at creating environmentally sound cars and reducing unwanted noise levels for drivers. As a result, the authentic, organic engine sound is masked more and more. For car aficionados who may buy vehicles specifically for the engine roar, this is not necessarily a good […]

Sound Design Founders of the Theatre

1. The Theatre’s Contribution to Sound Design As with any human discipline or industry, sound design as a practice and art form developed collectively over time, spurred on by the contributions of many and the striking visions and passion of leaders in the field. Below are two major contributors to the world of sound design […]

Hans Jenny and The Sound Matrix

Cymatics and the Sound Matrix Humans have long considered sound and music as mystical and magical, whether worshipped by the ancients and embedded in politics and culture in ancient China, regarded as a portal to the infinite by Buddhists chanting OM, or modern-day musicians and sound designers revelling in and revering their own sound […]

The Secret Power of Music: The Modern – Part II

Welcome to Part II of this blog discussion on David Tame’s The Secret Power of Music. Part I explained Tame’s main point in the initial part of his book. Namely, that music among the ancients, in philosophies that can be traced up to the present time, was considered an essential part of the source of […]

Sound and Sculpture: Sound and Architecture

Artists draw inspiration from everything. The entire world around them and the human relationships they have are all sources of experience that provide the meaning they need to express. One powerful source of expression for the visual arts is sound and music, the topic of this post. Below are some beautifully intricate creations inspired by […]

Harnessing the Power of Sound: Behavior and Invention

Sound is a force of nature that has its own unique properties. It can be used artistically to create music and soundscapes and is a vital part of human and animal communication, allowing us to develop language and literature, avoid danger, and express emotions. In addition, understanding and harnessing the unique properties of sound […]

Full Sail University and Point Blank School: Real Alternatives to Traditional Post-Secondary Education

Traditionally, post-secondary education in Western culture has for years hinged on both parents and students desiring an education that “rounds out” the student’s mind, i.e., a “liberal education.” This concept of the liberal education still commands the trajectory of many high-achieving students who graduate high school in the US, and gymnasium in Europe. The goal, […]

Surround Music in video games – we consider the viability and aesthetics

Considering the Aesthetics of Surround Music in Games, by Rob Bridgett Surround Music: The Emperor’s New Clothes? There has been much talk recently, predominantly among composers, dedicated to the virtues and benefits of surround music in video games. Certainly with the increased memory capacity of next-generation platforms, and a greatly increased install base of surround […]